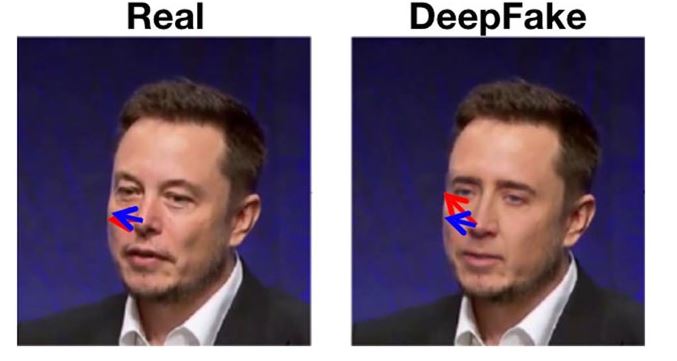

But Gardner said the tools widely available to make deepfakes lag behind the state of the art; they require about five minutes of audio and one to two hours of video. Yisroel Mirsky, an AI researcher and deepfake expert at Ben-Gurion University of the Negev, said the technology has advanced to the point where it’s possible to make a deepfake video from a single photo of a person, and a “decent” clone of a voice from only three or four seconds of audio. Gardner said it’s still an expensive and time-consuming proposition to develop these tools, but using them is comparatively quick and easy. The term deepfake is shorthand for a simulation powered by deep learning technology: artificial intelligence that ingests oceans of data to try to replicate something human, such as having a conversation (e.g., ChatGPT) or creating an illustration (e.g., Dall-E). That’s why experts advise taking a few simple steps to protect yourself and your loved ones from the new type of con. Tools to weed out this latest generation of deepfakes are emerging too, but they’re not always effective and may not be accessible to you. Fraudsters can copy a recording of someone’s voice that’s been posted online, then use the captured audio to impersonate a victim’s loved one; one 23-year-old man is accused of swindling grandparents in Newfoundland out of $200,000 in just three days by using this technique. Real-time deepfakes have been used to scare grandparents into sending money to simulated relatives, win jobs at tech companies in a bid to gain inside information, influence voters and siphon money from lonely men and women. There’s more to fear here than killer robots.
In response to Professors Chesney and Citron's blog, Herb Lin, a senior cyber policy research scholar at Stanford University, suggested technology vendors could create "digital signatures" that are assigned to the purchaser.

Although, as Dr Lin pointed out, encrypted keys already inserted into digital cameras have been cracked.

How do we authenticate content?

A watermark or a digital "key" to identify authentic content could be a useful tool, suggested Dr Harandi.

But there has been little movement towards a global protocol on these matters.

"We all will need some form of authenticating our identity through biometrics. This way people will know whether the voice or image is real or from an impersonator," Congressman Ro Khanna told The Hill. He suggested America's Defense Advanced Research Projects Agency should create a "secure internet protocol" to authenticate images.

"Public trust may be shaken, no matter how credible the government's rebuttal of the fake videos."

Teeth are notoriously hard to synthesise realistically.

But the technology will improve, and quickly.

Beyond the morality of porn "deep fakes", an altered video of Donald Trump, for example, could have serious geopolitical consequences.

Doctored videos could show politicians "taking bribes, uttering racial epithets, or engaging in adultery", suggested American law professors Bobby Chesney and Danielle Citron on the Lawfare blog.

Even a low-quality fake, if deployed at a critical moment such as the eve of an election, could have an impact. If you pay attention, you can see lip movements don't entirely match the speech.
German researchers have controlled Vladimir Putin's face.

Dr Mehrtash Harandi, a senior scientist who researches machine learning at Data61, said the output of these deep-learning machines is often blurry.

A public crisis

Barack Obama has been made to lip sync. And so has the search for new ways to sort fact from fiction. The democratisation of machine learning has begun. "It was 100 per cent predictable," said Hany Farid, a computer science professor at Dartmouth College, who specialises in digital forensics.

Dr Farid thinks we are at a crossroads: we can still tell when videos have been doctored.

Researchers use "Real-time Face Capture" on Russian President Vladimir Putin.
